S3-Compatible Object Storage for African Developers: No Egress Bill Shock

By Oluniyi D. Ajao · 4 min read

Evaluating alternatives to Amazon S3? The S3 API is the de facto standard for object storage: nearly every developer tool has S3-compatible integration built in, and reaching for boto3 or s3cmd is muscle memory for most. But for workloads African developers typically run, the dominant provider's pricing model is hard to live with.

A WordPress media library for a site serving Nigerian readers. A Postgres backup being restored to a staging box in Johannesburg. A video-on-demand service for audiences in Kenya. In all three, the dominant cost is egress (bandwidth leaving the bucket for the consumer or the restore target), and the major providers' egress pricing is punishing. Typical rates run $0.08–$0.12/GB for the first few terabytes, fall only at far larger volumes, and are higher still from African-region deployments. A popular e-commerce product page with a few images can leak hundreds of dollars a month in egress without anyone noticing until the bill arrives.
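To make that arithmetic concrete, here is a rough sketch of a monthly egress estimate. The page weight, view count, and the $0.09/GB rate are illustrative assumptions chosen from within the range quoted above, not figures from any provider's price list.

```python
def monthly_egress_cost(page_views: int, mb_per_view: float, usd_per_gb: float) -> float:
    """Estimate monthly egress spend for objects served straight from a bucket."""
    # Decimal GB (1 GB = 1000 MB) keeps the arithmetic simple; real invoices may differ.
    gb_transferred = page_views * mb_per_view / 1000
    return gb_transferred * usd_per_gb


# Hypothetical product page: 1.5 MB of images, 2 million views a month.
cost = monthly_egress_cost(page_views=2_000_000, mb_per_view=1.5, usd_per_gb=0.09)
print(f"${cost:,.2f} per month")  # $270.00 per month
```

Swapping in your own traffic numbers and your provider's published rate is usually enough to see whether egress or storage dominates the bill.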

This is the gap an S3-compatible alternative can fill: the same API developers already know, the same durability class, but with a pricing model that doesn't bleed on bandwidth.

What a real S3-compatible alternative needs to deliver

Three technical requirements and one commercial one:

- S3 API compatibility. Not "we have an object store with a different API", but actual S3 API compatibility. The point is that every SDK, every CI/CD pipeline, every backup tool speaks it natively. If you have to rewrite code to adopt a different API, the switching cost wipes out the savings.

- Durability at the same order of magnitude. The leading service quotes 99.999999999% (eleven nines) durability via multi-AZ replication. An alternative doesn't need to match that exactly (nine nines is plenty for most workloads), but it does need to be in the same conversation. A single-machine NAS with RAID-6 doesn't qualify.

- Read-after-write consistency. The incumbent has offered strong read-after-write consistency since 2020. Alternatives still using eventual consistency will bite you on any workflow where a newly uploaded object is read immediately, which is most workflows.

- Predictable bandwidth pricing. The whole point. If the alternative meters bandwidth at hyperscaler-grade rates, there's no reason to switch. Flat-rate included bandwidth, or a clear tiered model, is what makes the math work for African-audience workloads.
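The read-after-write requirement above is easy to smoke-test before committing to a provider. The sketch below assumes a boto3-style client (anything exposing `put_object`, `get_object`, and `delete_object`) and a scratch bucket you control; the `consistency-check/` key prefix is a hypothetical name. A clean run does not prove strong consistency, but any failure is a clear warning sign.

```python
import uuid


def check_read_after_write(client, bucket: str, attempts: int = 20) -> int:
    """Write fresh objects, read each back immediately, and count mismatches."""
    failures = 0
    for _ in range(attempts):
        key = f"consistency-check/{uuid.uuid4()}"  # hypothetical scratch prefix
        body = uuid.uuid4().bytes  # 16 unique bytes per attempt
        client.put_object(Bucket=bucket, Key=key, Body=body)
        try:
            got = client.get_object(Bucket=bucket, Key=key)["Body"].read()
            if got != body:
                failures += 1
        except Exception:
            # A missing key right after a successful PUT counts as a failure.
            failures += 1
        finally:
            # Clean up the scratch object whether or not the read succeeded.
            client.delete_object(Bucket=bucket, Key=key)
    return failures
```

With boto3 installed, `check_read_after_write(boto3.client("s3", endpoint_url=...), "scratch-bucket")` should return 0 against a strongly consistent endpoint.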

AFRICLOUD object storage as an alternative

AFRICLOUD offers S3-compatible object storage from our Lisbon and Johannesburg data centres, the two locations we run cloud VMs from. The endpoint speaks the S3 API, so existing tooling works unchanged: aws-cli with an endpoint override, boto3 with endpoint_url set, rclone, Duplicati, the S3 adapter in any modern CMS. Durability is multi-replica within the data centre; the access pattern is identical to standard S3-compatible services.
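As a minimal sketch of what "boto3 with endpoint_url set" looks like in practice: the environment variable names and the `https://objects.example.com` default below are placeholders invented for illustration, not real AFRICLOUD values. Take the actual endpoint and keys from your provider's console.

```python
import os


def s3_client_kwargs(env=os.environ) -> dict:
    """Build boto3 client arguments for an S3-compatible endpoint."""
    return {
        "service_name": "s3",
        # Placeholder endpoint; substitute the one your provider gives you.
        "endpoint_url": env.get("S3_ENDPOINT_URL", "https://objects.example.com"),
        # None lets boto3 fall back to its normal credential lookup.
        "aws_access_key_id": env.get("S3_ACCESS_KEY"),
        "aws_secret_access_key": env.get("S3_SECRET_KEY"),
    }


def make_client(env=os.environ):
    """Create the client; boto3 is imported lazily so the helper above has no dependencies."""
    import boto3  # third-party: pip install boto3

    return boto3.client(**s3_client_kwargs(env))


# Usage, once real credentials are exported:
#   s3 = make_client()
#   for bucket in s3.list_buckets()["Buckets"]:
#       print(bucket["Name"])
```

Keeping the endpoint in an environment variable means the same code runs against either provider, which makes a staged cut-over simpler.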

For African-audience workloads specifically, serving media to Nigerian, Ghanaian, or Kenyan users from our Johannesburg endpoint routes via NAP Africa (the continent's largest IXP, with 580+ peered networks) rather than bouncing the traffic back to a distant region. For European and North African audiences, Lisbon is the geographically shorter path: Morocco, Tunisia, and Egypt all run at sub-70 ms from our Lisbon endpoint.

Where the leading service still wins

Three genuine cases for staying on the incumbent:

- Deep ecosystem integration. If your pipeline relies on object-store events triggering serverless functions or ML training jobs in the same provider's ecosystem, migrating the object storage alone creates cross-cloud egress that wipes out savings.

- Global edge delivery. A tightly-integrated CDN plus object store gives you continent-spanning edge presence with one click. If your audience is globally distributed and you need edge in every region, an alternative with fewer PoPs is genuinely slower.

- Cold-tier archival pricing. For genuinely cold data accessed less than once a year, the deep-archive tiers at around $0.001/GB/month are hard to beat. An object-storage alternative without a cold tier costs more for this specific case.

Migration path

For most African-audience buckets, migration is a single rclone sync command and an application-level config update. Three steps:

- Configure rclone with both the source and target S3-compatible endpoints. Standard ~/.config/rclone/rclone.conf with two remotes.

- Sync the bucket: rclone sync source:your-bucket target:destination-bucket --progress. The source egress charge is real (one last bill on the way out), so for large buckets, budget for it.

- Update your application. Change the endpoint URL in your config to point at the new provider. For most SDKs this is a single environment variable or one line of code (boto3.client("s3", endpoint_url=...)).
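After the sync, it is worth confirming that every object arrived before switching the application over. rclone's own `rclone check` command covers this; the sketch below shows the same idea from Python, assuming two boto3-style clients and comparing only keys and sizes (not checksums). The function names are illustrative.

```python
def list_objects(client, bucket: str) -> dict:
    """Return {key: size_in_bytes} for every object in the bucket."""
    sizes = {}
    paginator = client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket):
        # Empty buckets (and empty pages) have no "Contents" entry.
        for obj in page.get("Contents", []):
            sizes[obj["Key"]] = obj["Size"]
    return sizes


def diff_listings(source: dict, target: dict) -> dict:
    """Report keys absent from the target and keys whose sizes differ."""
    missing = sorted(k for k in source if k not in target)
    size_mismatch = sorted(
        k for k in source if k in target and source[k] != target[k]
    )
    return {"missing": missing, "size_mismatch": size_mismatch}


# Usage, with source_client and target_client built for each endpoint:
#   report = diff_listings(list_objects(source_client, "your-bucket"),
#                          list_objects(target_client, "destination-bucket"))
```

An empty report on both lists is a reasonable signal that the bucket is ready for cut-over; a size check will not catch corrupted content of identical length, so use checksums where that matters.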

Test with a small read-only bucket first, verify the access patterns match, then migrate production. If you're using pre-signed URLs for downloads, double-check that they work against the new endpoint. They should, since it's the same API, but credential rotation is worth verifying before you cut over.
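One way to run the pre-signed URL check is sketched below, assuming a boto3-style client already pointed at the new endpoint. The helper names are illustrative; `generate_presigned_url` is the standard boto3 call, and the download test uses only the standard library.

```python
import urllib.request


def presigned_get_url(client, bucket: str, key: str, expires_in: int = 300) -> str:
    """Ask the client for a time-limited download URL for one object."""
    return client.generate_presigned_url(
        "get_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,
    )


def url_is_downloadable(url: str, timeout: float = 10.0) -> bool:
    """Fetch the first byte only, so the check itself costs almost no egress."""
    request = urllib.request.Request(url, headers={"Range": "bytes=0-0"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status in (200, 206)
    except OSError:
        # URLError and HTTPError are both OSError subclasses.
        return False


# Usage, with new_client built for the new endpoint and new credentials:
#   url = presigned_get_url(new_client, "destination-bucket", "path/to/object")
#   print(url_is_downloadable(url))
```

Running this once with the old credentials and once with the new ones shows quickly whether the rotation has taken effect.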

See our Object Storage page for current pricing and quickstart, or contact us to discuss migration specifics for your workload.

Related Articles

NVMe and Cloud Storage: Why Storage Type Matters for Your VPS

Apr 14, 2026

Cloud Alternative for African Developers: What to Consider Before You Migrate

Apr 23, 2026

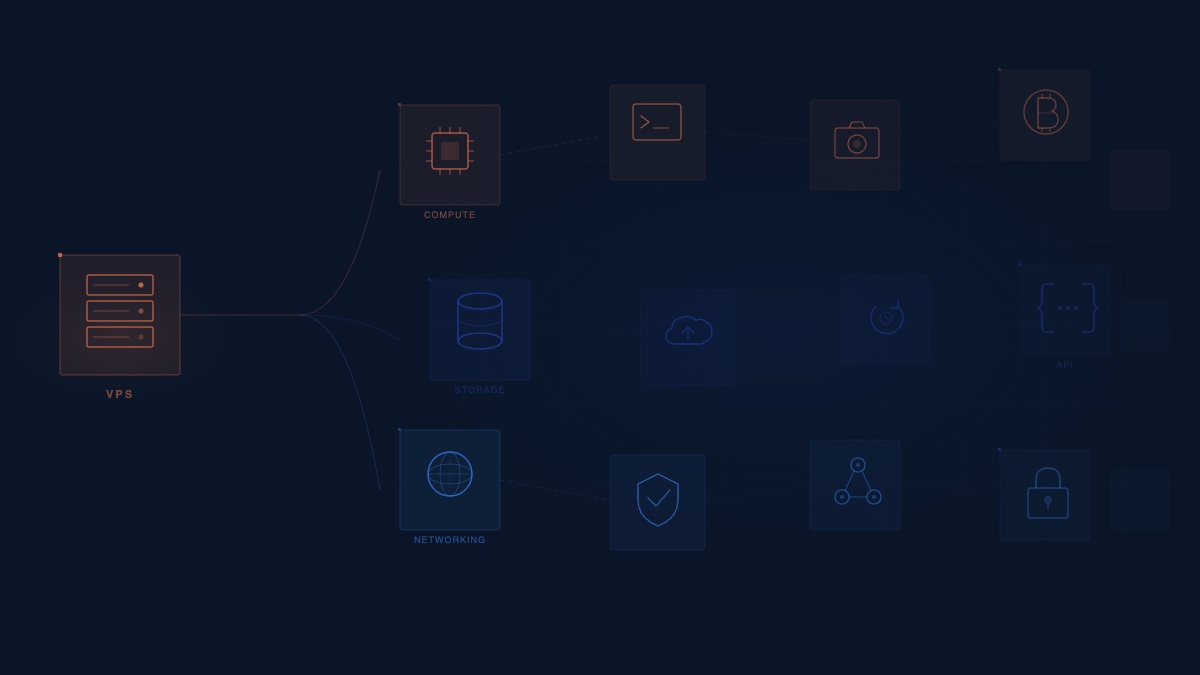

AFRICLOUD Evolves From VPS Hosting to a Full Cloud Infrastructure Platform

Mar 2, 2026

NVMe Storage Essential for African E-Commerce Speed

Sep 25, 2025